When Is The Right Time To Refurbish Your Datacentre?

For many organisations, there is no right time to refurbish their server room or datacentre environments. These facilities are in use 24/7, so any refresh, whether of a single critical system or the entire facility, is going to result in a period of downtime. However, most facilities are designed with a set working lifetime of 10-15 years, and this links directly back to the critical systems in operation. Whilst they may be well maintained and regularly tested, the fact is that around years 8-10 critical power and cooling systems are ready for an upgrade or replacement.

Energy Efficiency

Over the last 10 years there has been a greater focus on energy efficiency and how to improve it. The benefit for the server room or datacentre operator is lower electricity bills. From a wider perspective, greater energy efficiency lowers carbon footprints for corporate bodies and contributes towards climate change objectives at a national level.

Probably the biggest driver towards energy efficiency has been the adoption of energy efficiency metrics such as Power Usage Effectiveness (PUE) and its reciprocal, Data Centre infrastructure Efficiency (DCiE), developed by The Green Grid and published in 2016 within the global standard ISO/IEC 30134-2:2016. For more information on PUE and DCiE visit: https://www.thegreengrid.org/.

Green Washing

PUE, and to some extent DCiE, have become industry standard metrics for energy efficiency, and within the datacentre community the ratios have been used to differentiate one colocation operator from another. In a world moving to hyperscale cloud-based services, does this make a difference? Typical colocation (colo) datacentre operators seem to think so, and one can witness PUE marketing in action when comparing locations and what they offer.

However, used as intended, PUE and DCiE are more than a ‘green washing’ exercise. The metrics compare the power used by the IT loads with the total power used by the facility. The formulas are:

- PUE = Total Facility Power / IT Equipment Power

- DCiE = IT Equipment Power / Total Facility Power

At a datacentre level, where the facility has a sole purpose to provide a managed and secure environment, the measures make sense. For a server room which may be part of a building used for a range of other on-site services, the server room’s power and energy demands need to be metered independently.

The regular metering of energy and calculation of PUE (and DCiE) provides a baseline for comparison. For organisations interested in their energy usage and spend on electricity, the metrics provide a positioning statement of ‘where are we now’ or ‘how energy efficient is our IT operation’. With power densities increasing in server racks, any increase in electricity usage in a less energy efficient operation means more wasted energy and more demand on the cooling systems.
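As a rough illustration of how such a baseline might be tracked, the sketch below applies the two formulas above to a few months of meter readings. The function names and all of the readings are hypothetical, and metered energy (kWh) over the same period is used here in place of instantaneous power.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """PUE = total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def dcie(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """DCiE = IT equipment energy / total facility energy (reciprocal of PUE)."""
    if total_facility_kwh <= 0:
        raise ValueError("Total facility energy must be positive")
    return it_equipment_kwh / total_facility_kwh


# Hypothetical monthly readings: (total facility kWh, IT equipment kWh).
readings = {
    "Jan": (90_000, 50_000),
    "Feb": (84_000, 48_000),
    "Mar": (88_000, 52_000),
}

for month, (total_kwh, it_kwh) in readings.items():
    print(f"{month}: PUE={pue(total_kwh, it_kwh):.2f}  "
          f"DCiE={dcie(total_kwh, it_kwh):.0%}")
```

For a server room inside a mixed-use building, the total figure would need to come from sub-metering of the room's own power and cooling rather than from the building's main meter.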

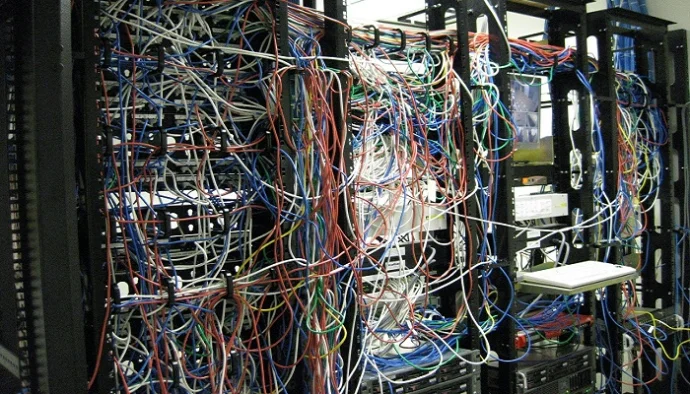

Critical Infrastructure System Upgrades and Refurbishment

Turning back to infrastructure systems, the two primary systems within a server room or datacentre are the critical power protection and cooling systems. Both have undergone developments and innovations over the last 10 years to improve their availability, scalability and operational energy efficiency.

Over 10 years ago, a three-phase uninterruptible power supply was typically a mono block design operated as a single unit or in a parallel N+X arrangement for resilience. The design may have been transformer-based or transformerless. The energy efficiency of the UPS system would be around 85-90% at 80-100% load, with the overall efficiency falling as the load reduced. Load reduction may have been achieved through the adoption of virtualisation or through server utilisation and load balancing.

Today’s mono block UPS systems tend to be transformerless, though there are also improved transformer-based systems using high efficiency transformers. In on-line mode, UPS systems can now achieve 96-98% operating efficiency across a wider load profile of 25-100%. Modular UPS systems have also become extremely popular, allowing the site to right-size the UPS to the load profile from day one whilst using additional modules to provide rapid scalability.
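To put the efficiency figures into context, here is a minimal sketch of the energy a UPS loses each year at a given efficiency. The 100 kW load and continuous 24/7 operation are assumptions chosen for illustration only; the 90% and 96% efficiencies are taken from the ranges quoted above.

```python
HOURS_PER_YEAR = 8760  # assumes continuous 24/7 operation


def annual_ups_loss_kwh(load_kw: float, efficiency: float) -> float:
    """Energy lost in the UPS per year for a constant load.

    Input power = load / efficiency, so the loss is the difference
    between input power and the load actually delivered.
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in the range (0, 1]")
    input_kw = load_kw / efficiency
    return (input_kw - load_kw) * HOURS_PER_YEAR


load_kw = 100.0  # hypothetical constant IT load
legacy = annual_ups_loss_kwh(load_kw, 0.90)  # older UPS at around 90%
modern = annual_ups_loss_kwh(load_kw, 0.96)  # newer UPS at around 96%

print(f"Legacy UPS losses: {legacy:,.0f} kWh/year")
print(f"Modern UPS losses: {modern:,.0f} kWh/year")
print(f"Difference:        {legacy - modern:,.0f} kWh/year")
```

The sketch ignores the fact that UPS losses end up as heat that the cooling system must also remove, so the real difference in facility energy is likely to be larger.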

Cooling solutions for server rooms and datacentres have also seen developments to improve their energy efficiency. There have also been developments in the technologies including liquid cooling systems and the use of containment to improve air flows and overall efficiency.

These critical infrastructure systems both typically have 10-year design lives, and that is with regular annual preventative maintenance. A UPS system, for example, will typically require a capacitor and fan refurbishment around years 8-10. Even with the batteries replaced every 3-4 or 7-8 years (dependent upon whether they are 5-year or 10-year design life batteries), the UPS will become less reliable over time.

Unlike UPS systems, cooling systems must be maintained annually, dependent upon the type of refrigerant used. As part of the HVAC system, the cooling system may be supporting the entire facility as well as the server room or datacentre IT space. Within the server area, a failure of the cooling system could be catastrophic and present a potential fire risk.

If a UPS or cooling system is at or over the 10-year mark, then it is time for refurbishment. Before that point, any increase in the frequency of alarms or failures should also be taken as a sign that it is time to replace the system.

Summary

There is a benefit to running critical infrastructure systems up to the 10-year mark. Energy efficiency and overall system innovations during the period will be extensive, leading to large potential savings in CAPEX and in OPEX. Due to advancements in design and electronics, systems typically become more compact and more energy efficient. Space is saved, capital costs fall and running costs improve through lower power usage and improved energy efficiency.

Other benefits from refurbishment programs include the option of a wider review to capitalise on the latest innovations and approaches. For example, energy storage systems are becoming more widely used to store energy from the grid at off-peak times (lower charges) or from local renewable sources such as wind turbines or solar PV plants. Advances in battery technologies, including lithium-ion batteries, and their coupling to an uninterruptible power supply allow the datacentre to use the grid as a backup rather than a primary supply, or provide the option to use the UPS to generate revenue as part of a National Grid demand side response (DSR) program.

There is little doubt as to the need to constantly review a server room or datacentre for refurbishment and upgrade. This should be planned for at least within a 10-year period and acted upon sooner if system alarms and failures become more frequent. With advances in technology, payback periods for a complete server room or datacentre refurbishment can be as short as 3-5 years, and if you’ve used PUE as your benchmark then you will easily be able to compare the metric before and after the datacentre or server room refurbishment project.
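As a simple illustration of how a before-and-after PUE comparison can feed a payback estimate, the sketch below uses entirely hypothetical figures for the IT load, tariff, PUE values and project cost. It assumes a constant IT load and a flat tariff, and it ignores maintenance savings, financing and any DSR revenue.

```python
HOURS_PER_YEAR = 8760  # assumes continuous 24/7 operation


def annual_energy_cost(it_load_kw: float, pue: float, tariff_per_kwh: float) -> float:
    """Yearly electricity cost: total facility power = IT load x PUE."""
    return it_load_kw * pue * HOURS_PER_YEAR * tariff_per_kwh


def simple_payback_years(project_cost: float, annual_saving: float) -> float:
    """Simple (undiscounted) payback period in years."""
    if annual_saving <= 0:
        return float("inf")  # the project never pays back on energy alone
    return project_cost / annual_saving


# Hypothetical inputs, for illustration only.
it_load_kw = 100.0
tariff = 0.20            # cost per kWh, in any currency
pue_before = 2.0
pue_after = 1.5
project_cost = 350_000.0

saving = (annual_energy_cost(it_load_kw, pue_before, tariff)
          - annual_energy_cost(it_load_kw, pue_after, tariff))
print(f"Annual saving:  {saving:,.0f}")
print(f"Simple payback: {simple_payback_years(project_cost, saving):.1f} years")
```

A fuller appraisal would discount future savings and allow for changes in tariff and IT load over the life of the new systems.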