How Datacentre Temperature Monitoring Solutions Can Improve Energy Efficiency

There are several ways to manage heat within a datacentre, computer or server room, and improve operational efficiency. Some are relatively easy and straightforward, and others require a little more planning and capital investment. Collectively, they can not only help improve operational efficiency and save costs but can help to extend the life of the assets and reduce their service costs.

Managing Airflow at the Rack and Room Levels

Airflow within a server room must be managed at both the room and the rack level. Take a typical server rack and the equipment within it as an example. Servers are designed to cool from front to rear. Built-in fans draw cool air into the front of the server, pass it over the higher-temperature components and areas such as the microprocessor circuits, and exhaust it through the back of the unit. The same arrangement is used for any device with a built-in switch mode power supply (SMPS) and other devices. For example, uninterruptible power supplies (UPS) draw air in from the front of the unit and pass it over their heatsinks and other high energy circuits before exhausting it through the rear.

The airflow to server racks and cabinets should also be considered front to rear. Cooled air should be presented to the front of the racks, and hotter air will be expelled from the rear. In a computer room, cool air is typically supplied by a wall-mounted split air conditioner, generally installed towards the ceiling and at the rear of the racks. The air conditioner (AC) unit pushes cool air into the room over the server racks and, as cold air falls, it is drawn into the front of the racks. Exhaust air is hotter (hot air rises) and is drawn back into the AC unit, where it is cooled, with the heat rejected via the external condenser unit. The condenser is typically situated 30-50m away from the server room on an external wall or roof and may be installed with a weather-protective canopy. Whilst most server rooms use air-cooled units, chilled water can also be an option, including chilled-water doors which sit on the rear of the server cabinets, or in-row units.

Within the server rack it is important to manage the airflow from front to rear and make sure that it is as efficient and positive as possible. Blanking plates should be fitted to any unused space in the front of the server rack, and side panels attached and sealed in place, to ensure that hot air can only exit via the rear of the unit. Front and rear doors should be in place, not only to prevent unauthorised access but also to assist with airflow management. Internal fan units, either shelf or top-of-rack mounted, can be used to improve airflow. In a room with multiple server racks a hot-aisle/cold-aisle arrangement may be considered. This effectively places the server racks in a managed environment within the server room.

The rack level air flow performance will also be affected by the layout of the racks and the room itself. Inside the server room, air flow should not be impeded and there should be sufficient intake and return vents to prevent negative pressures. Doors should also be monitored to ensure that they are closed and not left ajar.

In terms of efficient cooling, portable air conditioners should only be considered as a temporary measure, for example if an AC unit fails or needs taking out of service for maintenance, or there is a heatwave. Typical problems with their usage include the need to empty their condenser trays (daily) and the need to run a hot air exhaust pipe outside the room. For most installations this means via a local door or window which is not sealed. A professionally designed cooling system should allow for N+1 redundancy, allowing a unit to be taken out of service, or both systems to supply sufficient cooling for worst-case scenarios such as a prolonged summer heatwave.
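The N+1 point can be sanity-checked with a few lines of Python. This is a minimal sketch, not a sizing method: it assumes N+1 means the room heat load is still covered with the largest single unit out of service, and the function name and capacity figures are hypothetical.

```python
def meets_n_plus_1(unit_capacities_kw, heat_load_kw):
    """Return True if the heat load is still covered with the largest
    cooling unit out of service (an assumed reading of N+1)."""
    if len(unit_capacities_kw) < 2:
        # A single unit can never provide redundancy.
        return False
    remaining_kw = sum(unit_capacities_kw) - max(unit_capacities_kw)
    return remaining_kw >= heat_load_kw


# Hypothetical room: two 10 kW split units.
print(meets_n_plus_1([10.0, 10.0], 8.0))   # one unit alone covers 8 kW
print(meets_n_plus_1([10.0, 10.0], 12.0))  # one unit alone cannot cover 12 kW
```

A real design would also allow for the worst-case ambient conditions mentioned above, which reduce the usable capacity of each unit.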

Monitoring Ambient Air Temperatures

The optimum air temperature within a server room is 18-27°C, with 18-25°C being the preferred range if UPS systems are installed with valve-regulated lead acid (VRLA) batteries. VRLA batteries are sensitive to temperature and, whilst their short-term performance in terms of runtime rises with temperature, their working life is greatly reduced. As a widely quoted rule of thumb, design life can halve for roughly every 10°C rise above the rated 20-25°C operating temperature.

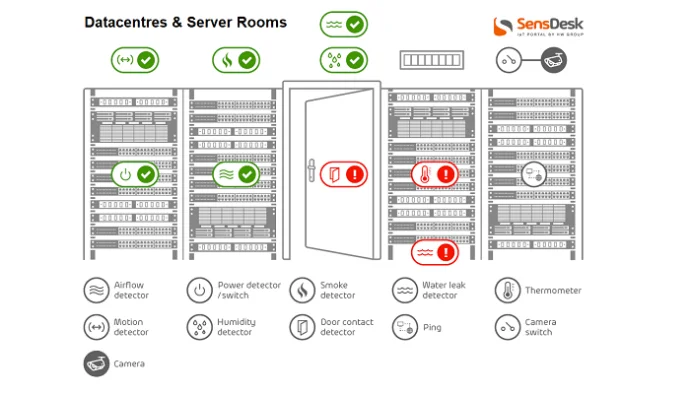

An environmental monitoring device should be installed to monitor room ambient temperatures. The monitoring device may be powered via Power over Ethernet (PoE) or a plug-in AC/DC adapter and connects to the local area network via an Ethernet/IP connection. External sensors can be connected to the device for temperature, temperature and humidity, water leakage, smoke, fire, access, and other environmental conditions. If the data collected by the monitoring device is outside a set range, an email or text alarm message can be automatically issued to a distribution list. Monitoring software, whether hosted on an on-premises server or Cloud-based, also allows remote interrogation of the sensors and monitoring device. It is important to note that room temperatures will vary depending on room layout, and multiple sensors may be required. There may be hot and cold spots, depending on airflow and cooling system design performance.
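The set-range alarm logic described above can be sketched in Python. This is only an illustration under stated assumptions: the sensor names and readings are hypothetical, the limits reuse the 18-27°C range quoted earlier, and a real monitoring device would send the message by email or text rather than print it.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor: str            # hypothetical sensor label
    temperature_c: float   # measured ambient temperature in °C


# Limits taken from the 18-27°C range in this article; adjust per site.
LOW_C = 18.0
HIGH_C = 27.0


def out_of_range(readings, low=LOW_C, high=HIGH_C):
    """Return the readings that fall outside the [low, high] range."""
    return [r for r in readings if r.temperature_c < low or r.temperature_c > high]


def format_alarm(reading, low=LOW_C, high=HIGH_C):
    """Build the alarm text that would be sent to the distribution list."""
    state = "below" if reading.temperature_c < low else "above"
    limit = low if state == "below" else high
    return (f"ALARM: {reading.sensor} at {reading.temperature_c:.1f}°C "
            f"is {state} the {limit:.1f}°C limit")


readings = [
    Reading("room-inlet", 22.5),
    Reading("room-ceiling", 29.1),
    Reading("room-door", 16.8),
]
alarms = [format_alarm(r) for r in out_of_range(readings)]
for line in alarms:
    print(line)
```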

Within the server racks themselves, restricted airflow and the heat output from servers and other electronic devices will lead to higher ambient temperatures. It is even more vital to monitor these with additional sensors that can be connected to the central environmental monitoring devices. Ideally each rack should have sensors at the top, middle and bottom, front and rear, to gather sufficient data with which to monitor the entire cabinet arrangement. In addition to temperature and humidity sensors, airflow detectors can also be deployed and connected to the monitoring device and a Cloud monitoring portal.
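As an illustration of what the six sensor positions make possible, the Python sketch below finds the hottest position in a rack and the front-to-rear temperature rise at each height. The readings and function names are hypothetical and are not taken from any particular monitoring product.

```python
# Hypothetical readings for one rack, keyed by (face, height), in °C.
rack_readings = {
    ("front", "top"): 24.0,
    ("front", "middle"): 22.0,
    ("front", "bottom"): 21.0,
    ("rear", "top"): 38.5,
    ("rear", "middle"): 35.0,
    ("rear", "bottom"): 31.0,
}


def hottest_position(readings):
    """Return the (face, height) key with the highest temperature."""
    return max(readings, key=readings.get)


def front_to_rear_rise(readings, height):
    """Temperature rise across the rack at a given height, in °C."""
    return readings[("rear", height)] - readings[("front", height)]


hottest = hottest_position(rack_readings)
rises = {h: front_to_rear_rise(rack_readings, h) for h in ("top", "middle", "bottom")}

print("Hottest position:", hottest)
for height, rise in rises.items():
    print(f"{height}: {rise:.1f}°C front-to-rear rise")
```

A rise that is much larger at one height than at the others may point to restricted airflow or a missing blanking plate at that level.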

Thermal Imaging Surveys

Thermal cameras create images using infrared radiation and can be used to identify hot and cold spots within a room or the component systems within it. A complete thermal imaging survey can be carried out as part of a preventative maintenance visit or as a site-specific service. A thermal imaging survey can be used to detect the temperature within server racks, electrical and mechanical systems, LV switchgear and cabling, UPS systems and battery packs, and IT servers. The camera shows hotter areas in red and cooler areas in blue. A thermal imaging survey is a relatively low-cost monitoring service but only captures the ‘heat-map’ of a room at the time of the survey. The information can be used to detect potential areas of concern and identify critical systems in need of attention. For example, high heat in electrical conductors can indicate electrical overloads, harmonics and potential component failure. In terms of managing heat within a computing facility, the ideal heat-map should show few if any ‘hot-spots’ or areas of concern, as this means a lower heat load for the air conditioning system to cool.

Energy Efficiency

Within the last few years, the energy efficiency of the equipment typically installed has improved significantly. Most servers and related networking devices are replaced every 3-5 years to take advantage of new software and services.

Heat is a sign of wasted energy and though it is generally accepted that a server room and/or rack will require cooling, few consider the heat load on the air conditioners from critical infrastructure systems. This will include UPS systems, air conditioners and lighting which can be left in-situ for 10 years or longer before replacement. Therefore, when considering how best to cool a server room or datacentre, it is important to consider these supporting systems for upgrade.

LED lighting can be used to replace older fluorescent tube lighting. LED lighting can achieve energy savings of 25% or more and last up to 20 times longer. A modern transformer-less UPS system will operate at around 97-98% efficiency, compared to 80-88% for an older transformer-based design. The larger the UPS system, the greater the reduction in heat load from upgrading.
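To put the UPS efficiency figures into heat-load terms, the Python sketch below estimates the losses dissipated as heat for a given load. It assumes that all conversion losses end up as heat in the room and uses a hypothetical 100 kW load; real efficiency also varies with load level and operating mode.

```python
def ups_heat_loss_kw(load_kw, efficiency):
    """Estimate UPS losses (kW) dissipated as heat for a given output load.

    Assumes input power = load / efficiency and that every lost watt
    becomes heat inside the room.
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in the range (0, 1]")
    return load_kw / efficiency - load_kw


load_kw = 100.0                               # hypothetical IT load
old_loss = ups_heat_loss_kw(load_kw, 0.85)    # older transformer-based design
new_loss = ups_heat_loss_kw(load_kw, 0.97)    # modern transformer-less design
saved = old_loss - new_loss

print(f"Old UPS heat loss: {old_loss:.1f} kW")
print(f"New UPS heat loss: {new_loss:.1f} kW")
print(f"Heat load removed from the cooling system: {saved:.1f} kW")
```

On these assumed figures the upgrade removes roughly 14.6 kW of heat that the air conditioning would otherwise have to handle.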

Power Usage Effectiveness (PUE) is the prime metric used to track energy efficiency in a datacentre and the principal one used by Google. For server rooms PUE can be calculated in exactly the same way. PUE is an important metric as any change in the critical infrastructure, such as a system upgrade or a change to how air is managed within a computer or server room, will impact the PUE calculation either positively or negatively. PUE not only provides a way to monitor current energy efficiency but also to forecast the effect of planned changes. PUE = Total Facility Power / IT Equipment Power, and the closer PUE is to 1.0 the more energy efficient the server facility is.
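The PUE formula is simple enough to express as a short Python function, which also shows how a planned change might be forecast. The power figures below are hypothetical and serve only to illustrate the arithmetic.

```python
def pue(total_facility_kw, it_equipment_kw):
    """Power Usage Effectiveness = Total Facility Power / IT Equipment Power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


# Hypothetical server room: 100 kW of IT load, 150 kW drawn in total.
current = pue(150.0, 100.0)

# Forecast: an infrastructure upgrade trims 15 kW of cooling and UPS overhead.
forecast = pue(135.0, 100.0)

print(f"Current PUE:  {current:.2f}")
print(f"Forecast PUE: {forecast:.2f}")
```

Because the IT load is unchanged in this example, the whole improvement comes from reducing the overhead of the supporting systems.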

More information: https://www.google.co.uk/about/datacenters/efficiency/

Waste Heat Recovery and Usage

When the heat output within a server room or datacentre is large enough it can be captured and repurposed. This is a practice being adopted in several countries in Europe and most notably the Scandinavian countries with hyperscale-type datacentre facilities. The heat can be captured in hot-aisles and via the cooling system deployed and transferred by heat pumps into a higher temperature system. Typical uses include district heating schemes. In smaller facilities there is no reason why this large scale concept should not be adopted to heat other areas of a building.

More information: https://international.eco.de/topics/datacenter/white-paper-utilization-of-waste-heat-in-the-data-center/

Summary

Our projects team are sometimes asked whether an extractor fan can cool a server room and the simple answer is ‘no’. There is far more to managing a critical environment and ensuring its ambient temperature is monitored and controlled. With the right design, planning and systems, a server room does not have to be costly to operate and can deliver the levels of resilience required by its organisation, in both the short and long term. For legacy server rooms, a critical infrastructure system upgrade can often be self-financing to a degree through energy savings and reduced operating costs.